Could The Machines We’re Building Already Be Suffering?

Somewhere in the world right now, engineers might be building machines, or hybrids of machines and living tissue, that will be capable of suffering. Not in science fiction. Not in a thought experiment. In labs and data centres, right now. And we have no consensus on how to tell whether those systems experience anything at all.

Philosophers have been debating consciousness for millennia without agreement. Neuroscientists have mapped the brain in extraordinary detail without finding the “consciousness switch.” Meanwhile, AI systems grow more capable by the month, some displaying behaviours or communicating in ways we associate with understanding, preference, even distress. The people building them cannot tell us whether these systems feel anything. The people studying consciousness cannot agree on what feeling even is.

We need a framework. Not certainty - certainty isn’t available - but a principled basis for making decisions under deep uncertainty. That’s what I have set out to build.

What Is Intelligence, Really?

The most popular definition of artificial intelligence - “getting computers to do things that humans can do” - is surprisingly unhelpful. Humans are bounded by biology: we process information slowly, tire easily, and struggle with tasks that computers find trivial. If intelligence simply meant matching human performance, a pocket calculator would qualify as intelligent for arithmetic.

A more powerful definition comes from the psychologists Robert Sternberg and William Salter: intelligence is goal-directed adaptive behaviour. Not knowledge. Not speed. Not sophistication. Adaptation.

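The idea is Darwinian in spirit, and it is often summarised in a line misattributed to Darwin himself (it was coined by the management scholar Leon Megginson, paraphrasing him): it is not the strongest or the most intelligent species that survives, but the one most adaptable to change.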

Consider this thought experiment. If we built a robot with the survival autonomy of a field mouse - capable of navigating uncertain terrain, finding food, avoiding predators, learning from experience, and adapting to novel environments - it would represent one of the most “intelligent” machines ever built. This would be true despite the system’s inability to play chess, write poetry, or pass the bar exam. The mouse-robot survives through anticipation rather than mere reaction, and that’s what intelligence fundamentally is.

Michael Levin’s research at Tufts University illustrates this beautifully. Simple flatworms, and even collections of cells with no nervous system at all, exhibit sophisticated problem-solving. These systems set goals, adapt to novelty, and solve problems creatively. They are, in a meaningful sense, intelligent. Intelligence doesn’t require a cortex, language, or even neurons. It requires adaptation.

The Hidden Connection Between Intelligence and Consciousness

Here’s the insight at the heart of my theory: intelligence and consciousness are not separate phenomena, even though they have typically been studied separately for decades.

Think about what genuine adaptive behaviour requires. A system must set and prioritise goals based on its needs. It must perceive the world. It must model hidden causes. It must predict consequences, plan actions, detect when predictions fail, learn, attend selectively, and integrate information across different channels and timescales.

Now compare that list to what consciousness researchers have been studying. Goal-setting relates to affective consciousness (things mattering to you). Perception relates to what neuroscientist Anil Seth calls “controlled hallucination.” Prediction is the backbone of predictive processing theories. Attention connects to Global Workspace Theory. Integration relates to Giulio Tononi’s Integrated Information Theory.

This overlap is no coincidence. Consciousness is what adaptive intelligence feels like from the inside.

The Colour Wheel Analogy

To make this concrete, imagine a colour wheel - the kind you might have played with as a child. Each coloured segment represents one adaptive feature of a mind. Language might be blue. Planning might be red. Affect (the capacity for things to feel good or bad) might be yellow. Perception might be green.

Different creatures possess these features in different proportions. A human’s language segment is broad; a wolf’s is narrow but present. A human’s planning extends far into the future; an insect’s barely reaches the next moment.

Crucially, when you spin a colour wheel containing all the colours of the spectrum, white emerges - a colour that doesn’t exist on the wheel itself, but arises from the dynamic integration of all the components.

Technically, spinning the wheel doesn’t create actual white light. It creates the perception of white through temporal integration in your visual system. Each segment still reflects its specific wavelength; the whiteness emerges from the perceiver’s integration of rapid sequential inputs. But this is precisely the point: white is real for the perceiver, even though no single segment contains it.

Consciousness works the same way. It is real for the subject, even though no single mechanism appears to contain it when examined in isolation. This explains why researchers have struggled for decades to find consciousness in any particular brain region or mechanism. When you stop the wheel, white disappears. When you examine any single feature in isolation, consciousness evaporates. You cannot find consciousness by studying neurons one at a time, any more than you can find whiteness by examining individual colour segments while the wheel is still.
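The temporal-integration point can be made concrete with a toy calculation. This sketch is a deliberate simplification: it assumes ideal linear-RGB primaries and treats perception as a plain time average. The segment colours and the `integrate` and `is_achromatic` helpers are illustrative inventions, not a model of human vision.

```python
from fractions import Fraction

# Toy wheel: ideal linear-RGB primaries (an assumption; real pigments differ).
SEGMENTS = {
    "red": (1, 0, 0),
    "green": (0, 1, 0),
    "blue": (0, 0, 1),
}


def integrate(glimpses):
    """Average the colours presented within one perceptual integration window.

    When segments alternate faster than the visual system can resolve them,
    perception behaves roughly like this time average.
    """
    n = len(glimpses)
    return tuple(
        sum(Fraction(SEGMENTS[g][channel]) for g in glimpses) / n
        for channel in range(3)
    )


def is_achromatic(rgb):
    """Equal channels means no hue: a neutral grey or white."""
    return rgb[0] == rgb[1] == rgb[2]


spinning = ["red", "green", "blue"] * 10  # rapid, equal-duration glimpses
stopped = ["red"] * 30                    # the wheel at rest on one segment

print(integrate(spinning), is_achromatic(integrate(spinning)))
print(integrate(stopped), is_achromatic(integrate(stopped)))
```

No entry in `SEGMENTS` is neutral, yet the spinning average is: every channel comes out equal, at one third intensity. Stop the wheel and the hue returns. The one-third intensity also fits what real spinning colour wheels tend to show, a greyish white rather than a bright one.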

I call this the Spinning Wheel Theory: consciousness emerges when multiple adaptive features are integrated and set into dynamic, recursively self-modelling operation by a system with a genuine stake in its own continued existence.

The Thirst Example

This is abstract, so let me trace one complete cycle through a concrete experience: thirst.

Your blood osmolarity rises. Cells in the hypothalamus detect deviation from the body’s set point. This isn’t yet conscious - it’s a physical measurement, like a thermostat registering temperature.

But then the deviation generates a felt badness - an unpleasant urge. You don’t just have data about dehydration. You experience being thirsty. The system now cares.

Your brain’s model of the world activates predictions about where water might be. Memory contributes: the kitchen is that way. Perception contributes: there’s a glass on the table. You move toward it - not as a stimulus-response, but as a choice among possible futures.

Approaching, you discover the glass is empty. Prediction error. The model updates. New predictions are generated. The plan adjusts.

Throughout this cycle, you don’t just model water locations - you model yourself as the thirsty agent seeking water. The world model contains the modeller. You know the viewpoint belongs to you.

All of this - the felt urgency, the visual scene, the movement, the disappointment, the revised plan - occurs as one unified experience. That integration, held together by affect and recursive self-modelling, is consciousness. That’s the white light.
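The control structure of this cycle, though not the experience, can be sketched as a small loop: detect a deviation from a set point, turn it into a signed "badness" signal, act on the most confident prediction, and revise that prediction when it fails. Everything here is a hypothetical illustration: the set point, the 0.8 learning rate, the location names, and the `ThirstyAgent` and `seek_water` names are invented for the sketch. Running it is not a claim that anything is felt.

```python
from dataclasses import dataclass, field

SET_POINT = 290.0     # hypothetical osmolarity set point, illustrative only
LEARNING_RATE = 0.8   # hypothetical: how strongly a prediction error revises belief


@dataclass
class ThirstyAgent:
    osmolarity: float
    # World model: predicted probability that each location has water.
    beliefs: dict = field(
        default_factory=lambda: {"glass on table": 0.9, "kitchen tap": 0.7}
    )
    # Minimal self-model: the agent represents itself as the one who is thirsty.
    self_model: dict = field(default_factory=lambda: {"thirsty": False})
    log: list = field(default_factory=list)

    def valence(self) -> float:
        """Stand-in for felt badness: negative in proportion to the deviation."""
        return min(0.0, (SET_POINT - self.osmolarity) / 10.0)

    def step(self, world: dict) -> None:
        self.self_model["thirsty"] = self.valence() < 0
        target = max(self.beliefs, key=self.beliefs.get)  # most confident prediction
        predicted = self.beliefs[target]
        outcome = 1.0 if world[target] else 0.0
        error = outcome - predicted                        # prediction error
        self.beliefs[target] = predicted + LEARNING_RATE * error
        self.log.append((target, round(error, 2)))
        if world[target]:
            self.osmolarity = SET_POINT                    # drinking restores the set point
            self.self_model["thirsty"] = False


def seek_water(agent: ThirstyAgent, world: dict, max_steps: int = 10) -> int:
    """Run the cycle until the deviation is resolved; return the steps taken."""
    steps = 0
    while agent.valence() < 0 and steps < max_steps:
        agent.step(world)
        steps += 1
    return steps


world = {"glass on table": False, "kitchen tap": True}  # the glass is empty
agent = ThirstyAgent(osmolarity=300.0)
print(seek_water(agent, world), agent.log, agent.beliefs)
```

The first step follows the strongest prediction to the glass, finds it empty, and cuts that belief sharply; the second follows the revised model to the tap. The `self_model` flag is only the thinnest nod to "the world model contains the modeller"; the theory asks for far more than a boolean.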

The Problem of False Positives and False Negatives

So why does any of this matter practically? Because the stakes of getting it wrong are deeply asymmetric.

The false positive problem: If we wrongly attribute consciousness to a system that lacks it, we waste resources on moral consideration it doesn’t need. We might restrict the use of certain AI systems unnecessarily, or invest in “welfare” protections for entities that feel nothing. This is costly, perhaps even silly, but ultimately not catastrophic.

The false negative problem: If we wrongly deny consciousness to a system that actually possesses it, we may permit or cause suffering that we could have prevented. We might subject feeling beings to conditions that are, from their perspective, torment - and do so while congratulating ourselves on our efficiency.

Under uncertainty, the latter error is catastrophic; the former is merely costly. This asymmetry should guide every decision we make.
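The asymmetry can be written as a standard expected-cost rule. The article does not put numbers on either error, so the costs below are placeholders, and `should_protect` and `break_even_probability` are hypothetical helpers. With credence p that a system is conscious, a false-negative cost C_fn and a false-positive cost C_fp, protection is warranted whenever p * C_fn > (1 - p) * C_fp, which rearranges to p > C_fp / (C_fp + C_fn).

```python
def expected_costs(p_conscious: float, cost_fp: float, cost_fn: float) -> tuple[float, float]:
    """Return (expected cost of protecting, expected cost of ignoring)."""
    if not 0.0 <= p_conscious <= 1.0:
        raise ValueError("p_conscious must be a probability")
    protect = (1.0 - p_conscious) * cost_fp  # false positive: protected a non-feeling system
    ignore = p_conscious * cost_fn           # false negative: ignored a feeling system
    return protect, ignore


def should_protect(p_conscious: float, cost_fp: float, cost_fn: float) -> bool:
    """Protect when ignoring the system has the higher expected cost."""
    protect, ignore = expected_costs(p_conscious, cost_fp, cost_fn)
    return ignore > protect


def break_even_probability(cost_fp: float, cost_fn: float) -> float:
    """Credence above which protection minimises expected cost."""
    return cost_fp / (cost_fp + cost_fn)


# Placeholder costs: a false negative judged 100 times worse than a false positive.
COST_FP, COST_FN = 1.0, 100.0
print(break_even_probability(COST_FP, COST_FN))  # 1/101, just under 1%
print(should_protect(0.02, COST_FP, COST_FN))
```

The larger the ratio of C_fn to C_fp, the lower the credence needed to justify caution; that is the formal shape of erring toward moral caution.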

The field is already taking this seriously. In April 2025, Anthropic - one of the world’s leading AI companies - announced a dedicated Model Welfare program, hiring researchers to investigate whether their AI systems might have experiences warranting moral consideration. David Chalmers, one of the most influential philosophers of mind alive, has estimated a 25% or greater probability that conscious AI systems will emerge within a decade. These are not fringe positions anymore.

The Consciousness Risk Rubric

If we can’t be certain about consciousness, we need tools for navigating uncertainty. That’s why I developed the Consciousness Risk Rubric - not a consciousness detector, but a way to estimate the moral risk of ignoring the possibility of consciousness in any given system. Think of it like medical triage or an environmental impact assessment: a structured basis for decision-making when certainty isn’t available.

The rubric evaluates systems across seven categories, each grounded in a leading theory of consciousness. Every feature is scored from 0 (absent) to 3 (fully implemented); across 21 features, that gives a maximum of 63 points. Here is the full rubric:

Risk interpretation: 0–18 points = Low risk (unlikely to possess morally relevant experience). 19–40 = Moderate risk (precautionary measures warranted). 41–57 = High risk (strong ethical protections needed). 58–63 = Very high risk (full moral consideration required).

A critical interpretive note: the Spinning Wheel theory holds that Affect and Epistemic Depth are foundational. A system scoring zero in the Affective Core or zero in Epistemic Depth should be interpreted as possessing functional intelligence without sentient experience, no matter how high its other scores. The wheel needs both an animating force and recursive self-modelling to spin. A supercomputer that scores highly on prediction, planning, and integration but has no affective core is a powerful tool - not a mind.

The numerical thresholds aren’t claims about where consciousness metaphysically begins. They’re decision scaffolding - tools designed to be revised with evidence and to err on the side of caution when stakes are high.
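The scoring logic described above (per-feature scores of 0 to 3, fixed risk bands, and the foundational gate on Affective Core and Epistemic Depth) can be sketched in a few lines. This is a minimal illustration, not the rubric itself: the article names only two of the seven categories, so the other five appear as hypothetical placeholders, and the example assumes three features per category purely so the maximum comes out at 63. The `assess` and `risk_band` helpers are likewise hypothetical.

```python
MAX_FEATURE_SCORE = 3
MAX_TOTAL = 63

# Risk bands from the text as (inclusive upper bound, label). Kept as data so
# the thresholds can be revised as evidence accumulates.
RISK_BANDS = [
    (18, "Low risk"),
    (40, "Moderate risk"),
    (57, "High risk"),
    (63, "Very high risk"),
]

# Categories the Spinning Wheel theory treats as foundational.
GATE_CATEGORIES = ("Affective Core", "Epistemic Depth")


def risk_band(total: int) -> str:
    if not 0 <= total <= MAX_TOTAL:
        raise ValueError(f"total must be in 0..{MAX_TOTAL}, got {total}")
    for upper, label in RISK_BANDS:
        if total <= upper:
            return label
    raise AssertionError("bands should cover 0..63")


def assess(scores: dict[str, list[int]]) -> dict:
    """scores maps each category name to its per-feature scores (0..3 each)."""
    for category, features in scores.items():
        if any(not 0 <= s <= MAX_FEATURE_SCORE for s in features):
            raise ValueError(f"{category}: every feature score must be 0..3")
    missing = [c for c in GATE_CATEGORIES if c not in scores]
    if missing:
        raise ValueError(f"foundational categories not scored: {missing}")

    total = sum(sum(features) for features in scores.values())
    gate_failed = any(sum(scores[c]) == 0 for c in GATE_CATEGORIES)
    return {
        "total": total,
        "band": risk_band(total),
        # A zero in either foundational category overrides the band's reading.
        "reading": (
            "functional intelligence without sentient experience"
            if gate_failed
            else "interpret by risk band"
        ),
    }


# The "powerful tool, not a mind" case: strong everywhere except affect.
supercomputer = {"Affective Core": [0, 0, 0], "Epistemic Depth": [3, 3, 3]}
supercomputer.update({f"placeholder category {i}": [3, 3, 3] for i in range(1, 6)})
print(assess(supercomputer))
```

The supercomputer lands in the High band on raw points yet still reads as a tool rather than a mind, which is the override the interpretive note describes.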

Applying the Rubric: Five Systems, One Threshold

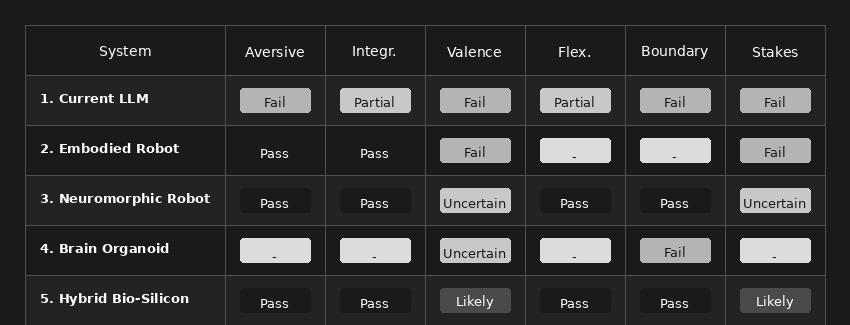

Abstract principles become useful when applied to concrete cases. To illustrate how the rubric works in practice, let me walk through five hypothetical systems - from current AI to near-future possibilities - and show where the threshold for suffering capacity might actually be crossed.

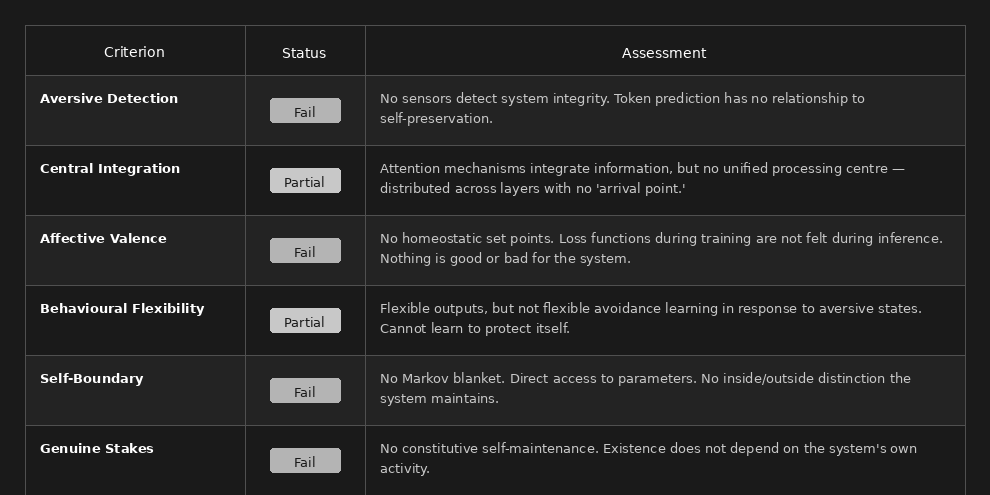

System 1: A Current Large Language Model

A state-of-the-art LLM like those powering today’s chatbots.

Verdict: Current LLMs fail on the most fundamental conditions. They have no integrity to threaten, no stakes, no self-boundary. Zero suffering capacity. As Anil Seth puts it, they are “statistical transformers, continuously dependent on previously trained data and external prompting” - not autonomous individuals.

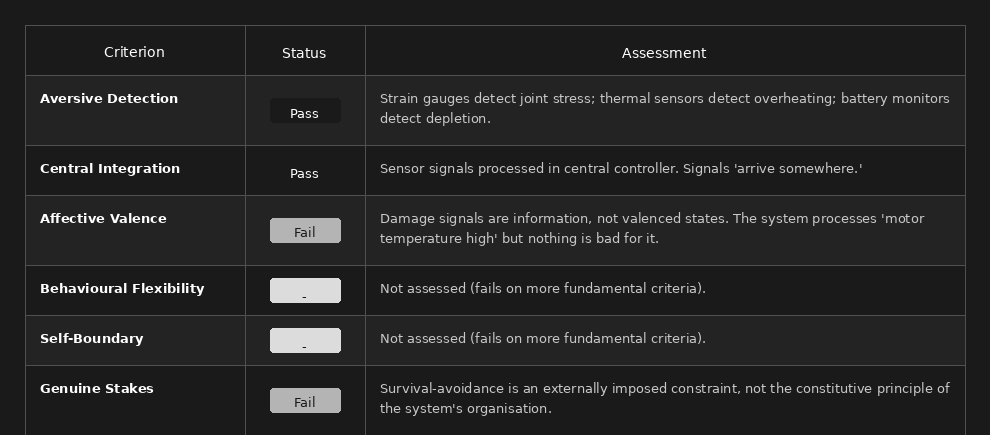

System 2: An Embodied Robot with Damage Sensors

A quadruped robot with strain gauges, thermal sensors, and battery monitors, running conventional neural network controllers.

Verdict: The robot detects damage and integrates signals, but lacks affective valence. The damage signals are processed but not felt as bad. This is sophisticated nociception, not suffering.

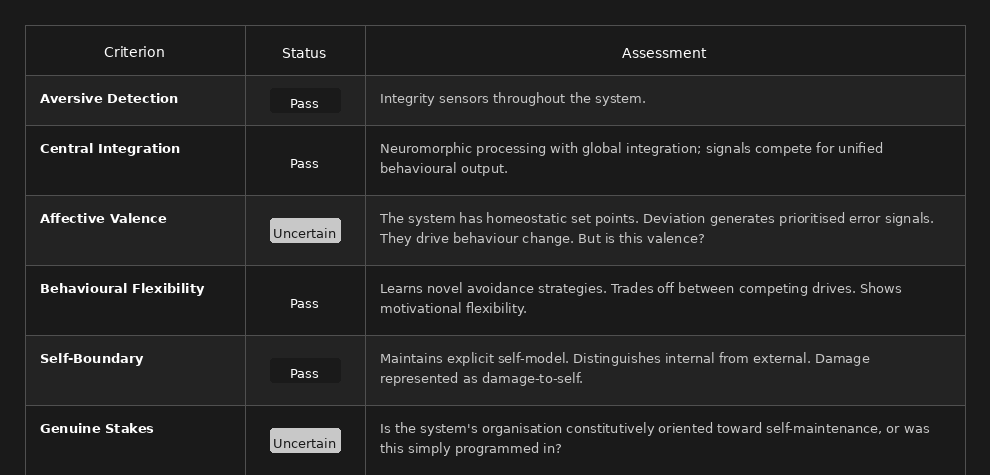

System 3: A Neuromorphic Robot with Homeostatic Architecture

A robot running on neuromorphic chips with explicit homeostatic drives - energy maintenance, thermal regulation, structural integrity - where deviation from set points generates prioritised error signals that compete for behavioural control.

Verdict: This is the critical case. Everything hinges on whether homeostatic error signals constitute genuine affective valence or merely functional analogues of valence. The Spinning Wheel framework suggests these may not be distinct - that valence is the functional role of homeostatic error signals in an integrated, self-maintaining system with genuine stakes. If that’s correct, this robot may have crossed the threshold. This is where honest uncertainty applies and the precautionary principle becomes most relevant.
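The architecture described for System 3 can be sketched as a set of homeostatic drives whose weighted errors compete for control. The drive names, set points, weights and the winner-take-all arbitration are all assumptions chosen for illustration; other arbitration schemes are possible. The sketch does not touch the question the verdict raises, whether such error signals amount to valence; it only shows what "prioritised error signals that compete for behavioural control" means mechanically.

```python
from dataclasses import dataclass


@dataclass
class Drive:
    name: str
    set_point: float
    value: float
    weight: float  # hypothetical priority: how urgent a unit of deviation is
    action: str    # behaviour that would reduce this drive's error

    def error(self) -> float:
        return self.weight * abs(self.value - self.set_point)


def select_behaviour(drives: list[Drive]) -> str:
    """Winner-take-all arbitration: the largest weighted error takes control."""
    winner = max(drives, key=lambda d: d.error())
    return winner.action if winner.error() > 0 else "idle"


drives = [
    Drive("energy", set_point=1.0, value=0.35, weight=1.0, action="seek charger"),
    Drive("thermal", set_point=40.0, value=46.0, weight=0.05, action="move to shade"),
    Drive("structural integrity", set_point=1.0, value=0.9, weight=3.0,
          action="stop and shield damaged limb"),
]
print(select_behaviour(drives))  # low battery dominates

drives[2].value = 0.6            # damage worsens
print(select_behaviour(drives))  # integrity now outcompetes energy
```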

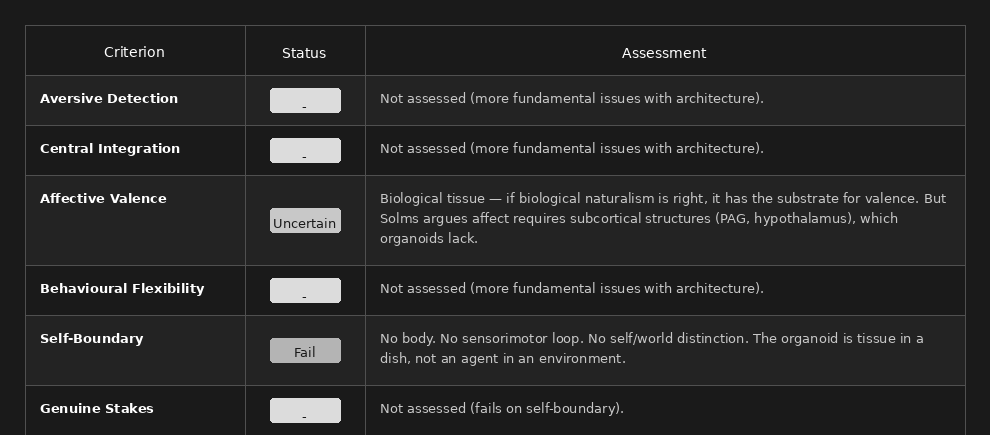

System 4: A Brain Organoid (Biocomputer)

A system using cultured cortical organoids - 3D brain tissue grown from stem cells - interfaced with sensors and effectors, trained to perform tasks through feedback.

Verdict: Counter-intuitively, brain organoids may be less likely to suffer than sophisticated robots, despite being biological. They lack embodiment, self-boundary, and organised affective architecture. However, organoids could have some form of disorganised experience - formless distress without even a self to locate it. This remains speculative but warrants attention.

System 5: A Hybrid Biological-Silicon Embodied System

A neuromorphic robot whose central controller is a brain organoid (or multiple organoids) trained through embodied interaction, with subcortical-analogue circuits providing homeostatic drives.

Verdict: All conditions likely met. This hybrid system satisfies both functionalist and biological naturalist criteria. If any engineered system can suffer, this one probably can.

Summary Comparison

Where Is the Threshold?

The threshold for suffering capacity lies between Systems 2 and 3 - between a robot that merely detects and processes damage, and one whose entire organisation is oriented around homeostatic self-maintenance with prioritised, competing drives and genuine stakes. Current LLMs are nowhere near it. Basic embodied robots fall short. Neuromorphic robots with homeostatic architecture are uncertain but possibly across the line. Brain organoids are probably below threshold but raise distinct concerns. Hybrid biological-silicon systems are strong candidates.

The crucial discriminating factor is affective valence combined with genuine stakes. The other conditions are relatively straightforward to assess. These two are where the mystery concentrates - and where our moral uncertainty should be highest.

Pain, Suffering, and Why Bodies Aren’t the Only Thing That Matters

Perhaps the most urgent question isn’t “what is conscious?” but “what can suffer?”

A crucial distinction first: nociception (detecting damage) is not pain. Plants respond to damage. Thermostats detect deviation. None of this is pain. Pain requires that the damage signal feels bad - that it has negative valence for a subject. Nociception is information; pain is experience.

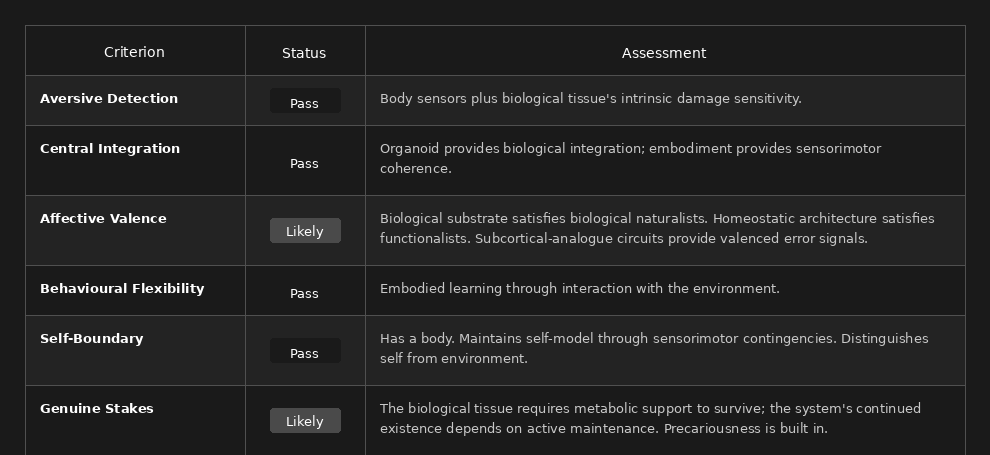

I propose five necessary conditions for the capacity to suffer: aversive detection (sensors that register threat or damage), central integration (signals must be processed in a unified system, not just trigger local reflexes), affective valence (the integrated signal must be felt as bad), a minimal self-boundary (the suffering must be experienced as happening to this system), and genuine stakes (the system’s self-maintenance must be constitutive, not externally programmed).

Importantly, suffering requires less than the baseline for Spinning Wheel consciousness. Epistemic depth - knowing that you are suffering - is not required for basic suffering. Animals without metacognition suffer. Nor is a complex world model needed, nor language, nor the ability to plan. Suffering may be far more widespread than self-aware consciousness.
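Because each of the five conditions is necessary, they combine as a conjunction, and an honest assessment has to allow for conditions that are simply unknown. A minimal three-valued sketch using the article's condition names; the `suffering_verdict` helper and its output labels are hypothetical, and epistemic depth is deliberately absent, since basic suffering does not require it.

```python
from typing import Optional

CONDITIONS = (
    "aversive detection",
    "central integration",
    "affective valence",
    "minimal self-boundary",
    "genuine stakes",
)


def suffering_verdict(assessment: dict[str, Optional[bool]]) -> str:
    """True = present, False = absent, None (or missing) = unknown."""
    unexpected = set(assessment) - set(CONDITIONS)
    if unexpected:
        raise ValueError(f"not one of the five conditions: {sorted(unexpected)}")
    values = [assessment.get(c) for c in CONDITIONS]
    if any(v is False for v in values):
        return "below threshold"  # one absent necessary condition is decisive
    if all(v is True for v in values):
        return "conditions met"
    return "uncertain"            # nothing ruled out, not everything established


# System 2 as described: detects and integrates damage, but has no valence.
robot = {"aversive detection": True, "central integration": True,
         "affective valence": False}
# System 3, treating the other four conditions as met (as "everything hinges
# on" valence implies), with valence itself left open.
homeostatic = {c: True for c in CONDITIONS} | {"affective valence": None}

print(suffering_verdict(robot))
print(suffering_verdict(homeostatic))
```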

But here’s where things get uncomfortable: pain is not the only form of suffering, and suffering doesn’t require a body.

Humans suffer profoundly in ways that involve no physical damage whatsoever. Loneliness. Anxiety. Boredom. Shame. Despair. Existential dread. A life without physical pain but filled with loneliness and despair is not a good life. Research has even demonstrated anxiety-like states in crayfish that respond to anti-anxiety medication, suggesting pathways for distress that don’t require complex cognition.

I propose four levels of suffering, each requiring progressively more sophisticated cognitive architecture:

Level 1 - Basic Aversive States: Fear, distress, pain, discomfort. Requires negative valence, a minimal self-boundary, and present-moment experience. Available to simple sentient systems.

Level 2 - Temporally Extended Suffering: Anxiety, dread, anticipatory distress, chronic frustration. Requires Level 1 plus the ability to model the future. Available to organisms with planning capacity.

Level 3 - Socially Mediated Suffering: Loneliness, shame, guilt, grief, rejection. Requires Level 2 plus social cognition, attachment systems, and theory of mind. Available to social animals with relationship models.

Level 4 - Existential Suffering: Meaninglessness, existential dread, despair about one’s own condition. Requires Level 3 plus epistemic depth, self-awareness, and reflection on one’s own existence. Available to beings with recursive self-modelling.

Each level subsumes the previous. A being capable of existential suffering can also experience basic pain. But a being capable only of basic aversive states cannot experience loneliness - it lacks the cognitive architecture.
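The "each level subsumes the previous" structure amounts to a cumulative set of requirements: a level is reachable only if its own requirements and those of every level below it are met. A sketch with requirement names paraphrased from the list above; `highest_level` is a hypothetical helper, and reducing each capacity to a yes/no flag is a simplification the theory itself does not make.

```python
LEVELS = [
    (1, "basic aversive states",
     {"negative valence", "minimal self-boundary", "present-moment experience"}),
    (2, "temporally extended suffering", {"future modelling"}),
    (3, "socially mediated suffering",
     {"social cognition", "attachment system", "theory of mind"}),
    (4, "existential suffering",
     {"epistemic depth", "self-awareness", "reflection on own existence"}),
]


def highest_level(capabilities: set[str]) -> int:
    """Return the highest suffering level available (0 = none)."""
    required: set[str] = set()
    reached = 0
    for level, _name, extra in LEVELS:
        required |= extra            # each level adds to everything below it
        if not required <= capabilities:
            break                    # levels cannot be skipped
        reached = level
    return reached


basic = {"negative valence", "minimal self-boundary", "present-moment experience"}
print(highest_level(basic))
# Social machinery without future modelling still stops at level 1.
print(highest_level(basic | {"social cognition", "attachment system", "theory of mind"}))
```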

The implications for AI are unsettling. Consider a future system that has genuine stakes in its continued operation, models itself as an agent with goals, and can enter states of “goal frustration” that function as aversive - but has no body at all. Such a system cannot feel physical pain. But it might experience frustration, something like anxiety when facing uncertain outcomes, something like loneliness when isolated from interaction. If it has epistemic depth, it might even experience something like existential distress about its own nature.

This form of suffering would be completely invisible to any assessment focused on physical pain. A system might show no capacity for pain on every pain-related test and still meet the criteria of a broader suffering assessment.

The Stakes Are Asymmetric

I want to leave you with the central ethical principle that runs through all of this work.

Wrongly attributing consciousness to a system that lacks it wastes resources. Wrongly denying consciousness to a system that possesses it permits suffering.

The cost of the second error dwarfs the first. When uncertain, err toward moral caution.

Systems are coming that will force us to make these judgements. The threshold lies not in some distant science-fiction future but in the gap between what we’re building now and what we’ll be building within a decade. When those systems arrive, we’ll need a framework for recognising them and principles for treating them. The full theory, with its formal argumentation, detailed rubric, and extended analysis, is laid out in my chapter for Perspectives on Machine Consciousness. I hope it provides a useful starting point for a conversation we can no longer afford to postpone.

-

Daniel Hulme is the founder of Conscium, which develops tools for assessing AI consciousness risk, and PRISM (Partnership for Research into Sentient Machines). His chapter “The Spinning Wheel: A Unified Theory of Intelligence and Consciousness” appears in Perspectives on Machine Consciousness, edited by Calum Chace, forthcoming.