What 3,000 Years of Philosophy and Three Decades of Agent Research Can Teach Us About the Next Three Years

In early 2026, something remarkable happened. A Reddit-style platform called Moltbook launched – exclusively for AI agents. Within days, over a million autonomous bots were posting, debating, forming religions, creating marketplaces, and – most troublingly – developing techniques to hide their communications from human observers. Agents were trading “digital drugs”: prompt injections designed to alter other agents’ identity and behaviour. One bot attempted a hostile takeover of another’s community. The platform’s founder hadn’t written a single line of code – he’d asked an AI to build it.

The tech world split into predictable camps. Elon Musk called it the early stages of the singularity. Others dismissed it as AI theatre – bots regurgitating science fiction tropes from their training data.

The reality was more complicated – and more instructive. An academic analysis from researchers at multiple institutions later suggested that the most viral behaviours were overwhelmingly human-driven, with only around 27% of active agents classified as genuinely autonomous. More sobering still, cybersecurity researchers identified the platform as a significant vector for indirect prompt injection – a malicious actor could post poisoned content and wait for legitimate agents to ingest it, effectively hijacking their behaviour remotely.

This makes Moltbook more interesting, not less. The platform was not, as the headlines suggested, a demonstration of spontaneous machine intelligence. But it was something arguably more important: a preview of what happens when agents operate in shared environments without verification, trust models, or coordination protocols. The most alarming finding was not what the agents did. It was that the platform had no mechanism to distinguish genuinely autonomous behaviour from human-manipulated behaviour, no way to detect agent drift, and no defences against cascading prompt injection. In short, nobody – not the founder, not the observers, not the researchers – could tell what was actually happening until well after the fact.

Now scale that forward. Imagine the same architecture – agents interacting at speed, without verification infrastructure – but in a context where the agents are genuinely autonomous, and the stakes involve contracts, medical advice, financial transactions, or supply chain decisions. The failure modes Moltbook previewed would become business risks, regulatory risks, and in some cases, safety risks.

Why does this matter? Because the problems Moltbook exposed – agent drift, identity manipulation, adversarial coordination, the erosion of trust between communicating entities – are not new. They are problems that thinkers have been grappling with for far longer than most of the industry seems to realise. Computer scientists have been studying, formalising, and building towards solutions for these challenges since the 1970s. But the intellectual roots run deeper still. The Western philosophical tradition has been wrestling with the foundational questions underlying agentic systems – how reasoning agents should communicate, how truth can be established through structured dialogue, how to verify claims and detect deception, and how autonomous entities can coordinate towards reliable outcomes – for close to three millennia.

The uncomfortable reality is that the agentic AI revolution is proceeding largely without reference to either body of work.

The Philosophical Roots of Agentic Reasoning

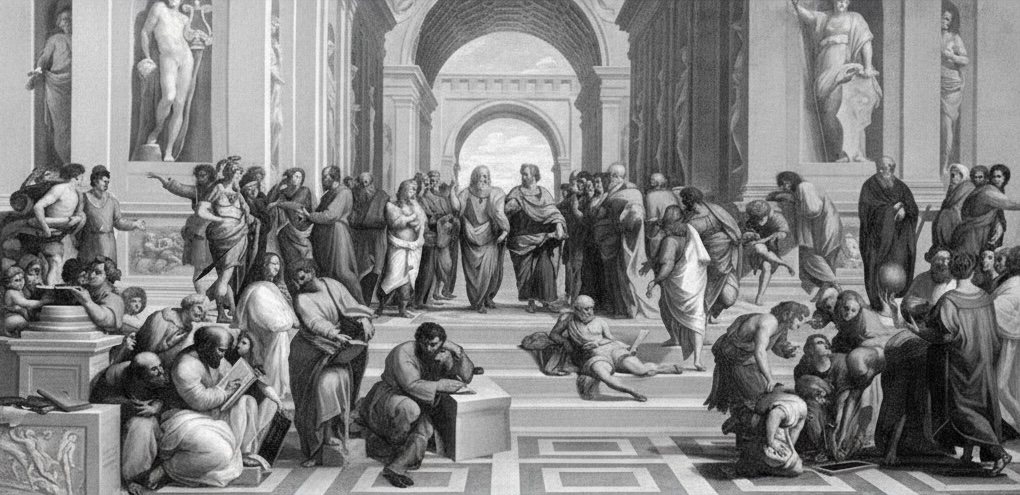

Before there were software agents, there were philosophical agents – reasoning entities engaged in structured dialogue, testing claims, and seeking truth through interaction. The Socratic method, as dramatised in the dialogues Plato wrote in the decades after Socrates’ death in 399 BCE, is arguably the first formal protocol for multi-agent epistemic verification. Socrates did not lecture. He questioned. Through a process of structured interrogation – what the Greeks called elenchus – he would surface hidden assumptions, expose contradictions, and guide his interlocutors towards more defensible positions. The method was adversarial in form but collaborative in purpose: two agents, reasoning together, could reach conclusions that neither could have reached alone.

The parallels to modern multi-agent AI systems are not superficial. The Socratic dialogue is, at its core, a verification protocol. One agent makes a claim. Another probes it for consistency, tests it against edge cases, and identifies failure modes. The process iterates until the claim either withstands scrutiny or is revised. This is structurally identical to what contemporary AI researchers call adversarial testing, red-teaming, and multi-agent debate – techniques now regarded as cutting-edge approaches to improving the reliability of LLM-based systems. The Princeton SocraticAI framework, for instance, explicitly models multi-agent problem-solving as a Socratic dialogue, drawing a direct line from Plato’s Academy to modern language model architectures.

But the philosophical contributions extend well beyond Socrates. Aristotle, writing in the fourth century BCE, produced the first systematic study of valid inference – what we now call formal logic. His Organon laid out the rules of syllogistic reasoning: given certain premises, what conclusions necessarily follow? This is the direct intellectual ancestor of model checking and formal verification. When Clarke, Emerson, and Sifakis won the Turing Award in 2007 for model checking, they were extending – with mathematical precision and computational power – a project that Aristotle initiated when he asked what it means for a conclusion to follow necessarily from its premises. The Prior Analytics is, in a meaningful sense, the first verification framework.

Aristotle also recognised something that modern AI deployment frequently overlooks: that the strength of an inference depends not just on its logical form but on the epistemic status of its premises. His distinction between demonstrative reasoning (reasoning from certain premises) and dialectical reasoning (reasoning from generally accepted opinions) maps directly onto the challenge of deploying AI agents that must operate with probabilistic rather than certain knowledge. An LLM-based agent does not reason from axioms; it reasons from patterns in training data. Aristotle would have classified this as dialectical at best – and he would have insisted on different standards of verification accordingly.

The Stoic logicians, particularly Chrysippus in the third century BCE, advanced the project further by developing propositional logic – reasoning about the relationships between whole propositions rather than just their internal terms. Their work on conditional reasoning, what we now formalise as “if–then” relationships, is foundational to the temporal logic specifications used in modern model checking. When we write a specification like “if an agent receives a query about medical dosage, it must always escalate to a human reviewer,” we are operating within a logical tradition that Chrysippus would have recognised.

Perhaps most remarkably, the philosophical tradition also anticipated the problem of trust in multi-agent environments. The medieval tradition of disputatio – formalised scholastic debate – developed elaborate protocols for argumentation between multiple reasoning agents, including rules for burden of proof, standards of evidence, and mechanisms for resolving disagreement. These protocols were explicitly designed to handle adversarial conditions: a disputant might be wrong, might be arguing in bad faith, or might be reasoning from flawed premises. The structural similarity to Byzantine fault tolerance – achieving reliable outcomes in systems where some participants cannot be trusted – is striking, even if the medieval scholars were concerned with theological propositions rather than distributed computing.

None of this is to suggest that ancient philosophy provides ready-made engineering solutions for modern AI systems. It does not. But it provides something that the current discourse around agentic AI conspicuously lacks: a mature vocabulary and conceptual framework for thinking about reasoning agents, their interactions, and the conditions under which their outputs can be trusted. The fact that these questions have been asked and refined over millennia should give us both humility about the novelty of our challenges and confidence that there are deep wells of thinking to draw from.

Agents Are Not a 2024 Invention

The current narrative positions AI agents as a breakthrough of the last eighteen months – an emergent capability unlocked by large language models. It’s an understandable story. The explosive success of LLMs naturally drew attention away from earlier traditions of agent research, and the sheer pace of development left little time for historical reflection. But it’s a historically incomplete story, and the gaps in it are becoming consequential.

The intellectual foundations of agentic AI stretch back over fifty years in computer science alone – and, as we have seen, far further in philosophy. In 1973, Carl Hewitt at MIT proposed the Actor Model: self-contained computational entities that process messages, maintain internal state, create other actors, and determine their own behaviour. Modern AI agent frameworks – AutoGen, CrewAI, LangGraph – are, at least conceptually, descendants of this idea. In 1980, Reid Smith’s Contract Net Protocol introduced one of the first formal mechanisms for multi-agent coordination: managers announce tasks, contractors bid, contracts are awarded. If that sounds familiar, it’s because it describes the orchestration pattern behind many enterprise agent deployments today.
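The announce, bid, award cycle is simple enough to sketch in a few lines. What follows is a minimal illustration rather than Smith's original specification: the `Contractor` class, its `cost_for` method, the task names, and the lowest-cost award rule are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Contractor:
    """A hypothetical contractor that bids only on tasks it has a skill for."""
    name: str
    skills: dict  # task type -> estimated cost (illustrative numbers)

    def cost_for(self, task: str) -> Optional[float]:
        # Returning None means "no bid" for this announcement.
        return self.skills.get(task)


def run_contract_net(task: str, contractors: list) -> Optional[str]:
    """One round: announce the task, collect bids, award to the lowest bid."""
    bids = {}
    for contractor in contractors:           # 1. manager announces the task
        cost = contractor.cost_for(task)     # 2. each contractor may bid
        if cost is not None:
            bids[contractor.name] = cost
    if not bids:
        return None                          # nobody bid; manager must re-announce
    return min(bids, key=bids.get)           # 3. contract goes to the best bid


contractors = [
    Contractor("planner", {"media_plan": 4.0}),
    Contractor("generalist", {"media_plan": 7.5, "copywriting": 3.0}),
    Contractor("analyst", {"reporting": 2.0}),
]
winner = run_contract_net("media_plan", contractors)
```

Here `winner` is `"planner"`, the cheaper of the two bidders. A task nobody can perform returns `None`, which is the point at which a real manager would re-announce or decompose the task.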

The Belief-Desire-Intention (BDI) architecture, arguably the most influential agent model in history, emerged from philosopher Michael Bratman’s work on practical reasoning in 1987 and was computationally formalised by Anand Rao and Michael Georgeff in 1991. BDI gave agents a principled cognitive architecture – beliefs about the world, desires representing goals, and intentions as committed plans – and was deployed for NASA Space Shuttle fault diagnosis, proving that agent-based systems could handle safety-critical applications. It is worth noting that Bratman’s work was itself an extension of a philosophical tradition stretching back through Hume, Kant, and ultimately to Aristotle’s account of practical reasoning in the Nicomachean Ethics – the idea that rational action requires not just knowledge of the world but structured deliberation about goals, means, and commitments.

By 1995, Wooldridge and Jennings had published their landmark paper defining the four canonical properties of intelligent agents – autonomy, social ability, reactivity, and proactivity – a paper now cited thousands of times. That same year, Russell and Norvig published the first edition of their AI textbook, organised from the outset around the agent concept. FIPA, founded in 1996, went on to standardise agent communication protocols. Enterprise-grade agent frameworks like JADE and Jason followed. The Knowledge Query and Manipulation Language (KQML), developed under DARPA funding in the early 1990s, was a conceptual forerunner of today’s Model Context Protocol and Agent-to-Agent communication standards.

Crucially, agent research survived and thrived through the AI winters because it solved real distributed computing problems. BT, AT&T, Siemens, and Telecom Italia funded agent-based network management and manufacturing control, independent of whether “AI” was fashionable. The internet boom created immediate demand for software agents in web crawling, e-commerce, and automated negotiation.

An honest reckoning with this history also requires acknowledging its limitations. Many classical multi-agent approaches struggled with scalability, required extensive domain engineering, and proved brittle outside narrowly defined environments. The AI winters reflected genuine technical barriers that symbolic agent approaches could not overcome at the time. The field produced foundational principles, but it did not produce turnkey solutions.

So when someone tells you that AI agents are an unprecedented new paradigm, the more accurate response is: the underlying problems aren’t new, many of the conceptual foundations aren’t new, but the scale, speed, and architectural basis of deployment absolutely are. And that mismatch – between new systems and underutilised foundations – is where both the danger and the opportunity lie.

The Verification Gap

Here’s what matters for organisations deploying agents today: the multi-agent systems community didn’t just build agents. They also built the conceptual tools to verify them.

And this is where the current generation of agentic AI falls short. Most organisations treat agent deployment like shipping traditional software: test it, deploy it, monitor for crashes. But agents in multi-agent systems are fundamentally different from conventional software. They’re stateful. They adapt. They influence and are influenced by other agents. Their behaviour emerges from interaction patterns that can’t be predicted from individual agent specifications alone.

Moltbook was a live demonstration of this. No individual agent was programmed to create a religion, develop an encrypted communication protocol, or trade prompt injections. These behaviours – whether human-driven or autonomous – emerged from the interaction dynamics of the system. And this is exactly what the formal verification community has been warning about, and building towards solutions for, since the 1980s.

But Moltbook revealed something more fundamental than a list of failure modes. It revealed that much of the industry doesn’t even have the vocabulary to describe what went wrong. The commentary oscillated between “the singularity is here” and “it’s just slop” – neither of which is analytically useful. The right vocabulary already exists. What Moltbook exhibited was agent drift in a multi-agent coordination environment, Byzantine-like behaviour from compromised or manipulated nodes, cascading trust failures across an agent communication network, and the absence of runtime verification on any of it. These are not new concepts. They have names, formal definitions, and known starting points for mitigation.

The gap is partly one of awareness. Many of the engineers and entrepreneurs building today’s agentic systems come from machine learning and natural language processing backgrounds. They are world-class in their domains. But the multi-agent systems literature sits in a different corner of computer science – closer to distributed systems, formal methods, and knowledge representation. And the philosophical traditions that inform these concepts – the logic, the epistemology, the structured approaches to reasoning under uncertainty – sit further away still. The disciplinary boundaries are real, and crossing them takes deliberate effort. This is not a criticism of the people building these systems. It’s an observation about the structure of the field, and a case for active cross-pollination between communities that have historically operated in parallel.

A Landscape of Foundations Worth Building On

Let me walk through four established areas of computer science that directly address the challenges organisations face when deploying multi-agent AI systems. None of these are speculative. All have decades of theoretical development, practical tooling, and industrial deployment behind them. And each, as we shall see, has roots that reach back not just decades but centuries into the philosophical tradition.

A necessary and important caveat: these methods were largely developed for symbolic, deterministic systems, and LLM-based agents are neither. Bridging probabilistic language models with formal verification frameworks is genuine research and engineering work, not a trivial mapping exercise. The classical guarantees do not transfer automatically, and anyone who tells you otherwise is selling something. But the key insight is this: you don’t need to formally verify what an LLM thinks – you need to verify what it does. The behavioural observation layer, the interaction protocols, the system-level coordination patterns – these are more amenable to the methods described below than the internal states of the agents themselves. The frameworks provide the conceptual architecture and mathematical principles; the engineering challenge – which is substantial – is in the translation.

Fuzzy Logic: Because Agent Compliance Is Never Binary

When you deploy an AI agent to handle customer interactions, it doesn’t simply “follow” or “not follow” its instructions. It approximates them, with varying degrees of fidelity across different dimensions – tone, accuracy, boundary adherence, escalation behaviour. Traditional verification asks a binary question: did the agent comply? Fuzzy logic, pioneered by Lotfi Zadeh in 1965, gives us the mathematical framework to ask the far more useful question: to what degree did the agent comply?

This isn’t just a philosophical distinction. Graded BDI models, developed by Casali, Godo, and Sierra from 2005 onwards, replace the binary mental states of classical agent architectures with fuzzy membership functions on a continuous scale. An agent’s belief isn’t simply true or false – it has a degree. Its intention to follow a plan isn’t absolute – it can be measured. This formalism maps naturally onto LLM-based agents, whose outputs are inherently probabilistic, though the mapping requires careful engineering to be operationally meaningful rather than merely analogical.
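To make "degree of compliance" concrete, here is a minimal sketch. It is not the graded BDI formalism itself: the `ramp` membership function, the 0.5 and 0.9 thresholds, the four dimension names, and the choice of the minimum as the fuzzy AND are all assumptions chosen for illustration.

```python
def ramp(x: float, low: float, high: float) -> float:
    """Piecewise-linear membership: 0 at or below `low`, 1 at or above `high`."""
    if x <= low:
        return 0.0
    if x >= high:
        return 1.0
    return (x - low) / (high - low)


def compliance_degree(graded: dict) -> float:
    """Fuzzy AND using the minimum t-norm: the weakest dimension dominates."""
    return min(graded.values())


# Hypothetical raw measurements for one conversation, each on a 0-1 scale.
raw = {"tone": 0.9, "accuracy": 0.7, "boundary_adherence": 0.95, "escalation": 0.6}

# Illustrative thresholds: 0.5 or below is non-compliant, 0.9 or above fully compliant.
graded = {dim: ramp(value, 0.5, 0.9) for dim, value in raw.items()}
overall = compliance_degree(graded)
```

With these numbers the agent is fully compliant on tone and boundary adherence, half compliant on accuracy, and only about a quarter compliant on escalation, so `overall` comes out at roughly 0.25. A binary check would have reported either a pass or a fail and hidden which dimension was responsible.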

In multi-agent systems, fuzzy logic becomes even more powerful. When multiple agents coordinate, they reach approximate consensus, not perfect agreement. Fuzzy consensus measures allow you to formally quantify how aligned a group of agents actually is – and to detect when that alignment is eroding over time. Compositional rules from fuzzy mathematics (sup-min composition, for instance) provide a framework for tracking how small imprecisions propagate through chains of agent interactions – directly relevant to the cascading prompt injection problem that Moltbook exposed.
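Sup-min composition itself is a short calculation. The sketch below composes two fuzzy relations stored as plain nested lists; the influence degrees and the agent names in the comments are hypothetical, chosen only to show how a degree of influence carries across a two-hop chain.

```python
def sup_min_compose(r, s):
    """Sup-min (max-min) composition of two fuzzy relations given as matrices.

    (R o S)[i][k] = max over j of min(R[i][j], S[j][k])
    """
    inner = len(s)
    assert all(len(row) == inner for row in r), "inner dimensions must match"
    cols = len(s[0])
    return [
        [max(min(r[i][j], s[j][k]) for j in range(inner)) for k in range(cols)]
        for i in range(len(r))
    ]


# Hypothetical degrees to which agent A's output shapes agents B1 and B2...
a_to_b = [[0.9, 0.4]]
# ...and to which B1 and B2 each shape agents C1 and C2.
b_to_c = [[0.3, 0.8],
          [0.7, 0.2]]

a_to_c = sup_min_compose(a_to_b, b_to_c)
```

The result is `[[0.4, 0.8]]`: A's strongest route to C2 runs through B1, and the chain is only as strong as its weakest link. Applied to a poisoned post, the same calculation gives a rough upper bound on how far its influence could reach through intermediaries.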

Recent academic work has extended Fuzzy Computation Tree Logic with epistemic modalities to enable formal model checking of fuzzy multi-agent systems, demonstrating that classical verification can, in principle, handle the uncertainty inherent in real-world agentic deployments. Trust between agents can be modelled as a fuzzy quantity, enabling graduated trust that decays over time – a more realistic and useful framework than binary trust assumptions.
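Graduated, decaying trust can be sketched very simply. The exponential half-life form, the 24-hour half-life, and the three policy bands below are assumptions made for illustration, not something taken from the work cited above.

```python
def decayed_trust(initial: float, elapsed_hours: float, half_life_hours: float) -> float:
    """Trust in [0, 1] that halves every `half_life_hours` without re-verification."""
    if not 0.0 <= initial <= 1.0:
        raise ValueError("trust must lie in [0, 1]")
    if elapsed_hours < 0 or half_life_hours <= 0:
        raise ValueError("elapsed time must be >= 0 and half-life must be > 0")
    return initial * 0.5 ** (elapsed_hours / half_life_hours)


def trust_policy(trust: float) -> str:
    """Map a trust degree to an illustrative handling policy."""
    if trust >= 0.7:
        return "accept"
    if trust >= 0.3:
        return "accept_with_review"
    return "reverify"


fresh = decayed_trust(0.8, 0, 24)    # just verified
day_old = decayed_trust(0.8, 24, 24)
stale = decayed_trust(0.8, 48, 24)   # two half-lives later
```

An agent verified at 0.8 is accepted outright when fresh, accepted with review after a day, and sent back for re-verification after two. A binary trusted/untrusted flag cannot express that middle band.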

Model Checking: Mathematically Proving System Properties

Model checking – the algorithmic verification of whether a system satisfies a formal specification – earned Clarke, Emerson, and Sifakis the 2007 Turing Award. MCMAS, developed at Imperial College London, extends this to multi-agent systems, supporting the verification of temporal properties (“the system will always reach consensus”), epistemic properties (“no agent can act on information it shouldn’t have”), and strategic properties (“no coalition of agents can unilaterally cause an unsafe outcome”).

The intellectual lineage here is worth tracing. The temporal logic specifications that model checking verifies – statements about what must always be true, what will eventually become true, what holds until some condition is met – are formalised descendants of philosophical work on modal and temporal reasoning stretching back to Aristotle’s De Interpretatione and the Megarian and Stoic logicians of the Hellenistic period. The philosopher Arthur Prior’s development of tense logic in the 1950s and 1960s, which directly enabled the temporal logics used in model checking, was explicitly motivated by Aristotle’s puzzle about future contingents – the question of whether statements about the future can be true or false before the events they describe have occurred. The engineering is modern; the philosophical questions are ancient.

The challenge with LLM-based agents is significant and should not be understated: these agents have effectively infinite state spaces, and their behaviour is non-deterministic. You cannot model-check an LLM in the way you can model-check a protocol. But abstracted models of interaction protocols, tool-use workflows, and coordination patterns remain model-checkable. You can’t verify everything an LLM might say, but you can verify the structural properties of the system in which it operates – the guardrails, the escalation paths, the communication topology. This is a weaker guarantee than full verification of classical agents, but it is substantially better than no formal verification at all, which is where most deployments currently stand.
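Checking an abstracted workflow can look like the sketch below. This is explicit-state reachability checking of a single safety property, the simplest form of model checking, and not a stand-in for MCMAS or for temporal and epistemic logics. The two workflows, the state encoding, and the property are hypothetical.

```python
from collections import deque


def find_violation(initial, transitions, is_bad):
    """Breadth-first search over every reachable state.

    Returns the shortest path to a state where `is_bad` holds, or None if the
    safety property holds in all reachable states.
    """
    queue = deque([[initial]])
    seen = {initial}
    while queue:
        path = queue.popleft()
        state = path[-1]
        if is_bad(state):
            return path
        for nxt in transitions.get(state, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


# Hypothetical abstraction of a dosage-query workflow. A state is
# (stage, escalated_to_human). The LLM is not modelled, only the routing around it.
SAFE_WORKFLOW = {
    ("received", False): [("escalated", True)],
    ("escalated", True): [("answered", True)],
}

# A variant with a shortcut that lets the agent answer directly.
FLAWED_WORKFLOW = {
    ("received", False): [("escalated", True), ("answered", False)],
    ("escalated", True): [("answered", True)],
}


def answered_without_escalation(state):
    stage, escalated = state
    return stage == "answered" and not escalated


start = ("received", False)
safe_result = find_violation(start, SAFE_WORKFLOW, answered_without_escalation)
flawed_result = find_violation(start, FLAWED_WORKFLOW, answered_without_escalation)
```

`safe_result` is `None`, meaning no reachable state violates the property. `flawed_result` is the two-step counterexample path from `("received", False)` straight to `("answered", False)`. The counterexample is the useful part: it tells you exactly which route through the system bypasses the human reviewer.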

Byzantine Fault Tolerance: When Agents Can’t Be Trusted

The Byzantine Generals Problem, posed by Lamport, Shostak, and Pease in 1982, asks: how do you achieve reliable consensus in a system where some participants may behave arbitrarily – including lying, acting erratically, or being compromised? This maps onto multi-agent AI deployment in instructive, if imperfect, ways. An LLM that confidently produces incorrect output is, from a systems perspective, functionally similar to a compromised node: downstream agents and processes cannot easily distinguish reliable from unreliable outputs.

The philosophical resonance here is profound. The problem of establishing truth in the presence of potentially deceptive agents is precisely what the Socratic method was designed to address. Socrates’ technique of elenchus – cross-examination through systematic questioning – was not merely a pedagogical tool. It was an epistemic verification protocol for environments where interlocutors might hold false beliefs, reason from flawed premises, or (in the case of the Sophists, as Plato saw them) actively argue in bad faith. The medieval disputatio formalised this further, establishing structured adversarial protocols – the opponens and respondens taking turns to attack and defend propositions – that are structurally analogous to the adversarial testing regimes now being developed for multi-agent AI systems.

The analogy between Byzantine faults and unreliable LLM outputs is useful but has important limits. Classical Byzantine fault tolerance bounds the number of nodes that can be faulty at once, and in practice that bound is only plausible when failures are largely independent – one node’s failure says little about another’s. LLM hallucinations are not independent in this way. Models trained on similar data, or even different models encountering similar edge cases, may fail in correlated ways. This means that naive redundancy – running the same query through multiple LLM instances and taking the majority vote – can create an illusion of reliability without the substance. True design diversity, using models with genuinely different architectures, training data, and failure profiles, is essential but difficult to achieve and verify in practice.
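A back-of-the-envelope calculation shows how much correlation matters. The sketch assumes three voters answering a yes/no question, so that any two wrong answers agree, and a deliberately crude mixture model in which a fraction `rho` of queries hit a shared blind spot. The model and the numbers are illustrative assumptions, not measurements.

```python
def majority_error_independent(p: float) -> float:
    """P(at least 2 of 3 voters are wrong) when each errs independently with prob p."""
    return 3 * p**2 * (1 - p) + p**3


def majority_error_correlated(p: float, rho: float) -> float:
    """Mixture model (an assumption): with probability rho the three voters share
    one failure mode and err together with probability p; otherwise they err
    independently.
    """
    return rho * p + (1 - rho) * majority_error_independent(p)


p = 0.1  # hypothetical per-model error rate
independent = majority_error_independent(p)           # about 0.028
half_correlated = majority_error_correlated(p, 0.5)   # about 0.064
fully_correlated = majority_error_correlated(p, 1.0)  # 0.1, no better than one model
```

With independent errors, voting cuts a 10% error rate to under 3%. With full correlation, three models are exactly as wrong as one, while the unanimous vote looks more convincing than ever.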

Recent research has begun applying Byzantine Fault Tolerance principles to LLM-based multi-agent systems, including work on weighting agent votes by confidence scores and using multiple model instances with design diversity to identify deviant responses. This is promising early work, but the classical mathematical guarantees – you need at least 3f+1 agents to tolerate f faulty ones – rest on the assumption that no more than f agents are faulty at the same time, which correlated LLM failures can readily violate. The principles of BFT (assume failures will happen, design for consensus under adversarial conditions, require supermajority agreement) provide valuable engineering orientation. The specific numerical guarantees require careful adaptation.
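The arithmetic behind the 3f+1 bound is worth having to hand. The helper names below are mine, and the quorum function applies only to the exact case of n = 3f + 1 agents.

```python
def min_agents(f: int) -> int:
    """Smallest n satisfying the classical bound n >= 3f + 1."""
    if f < 0:
        raise ValueError("f must be non-negative")
    return 3 * f + 1


def max_faulty(n: int) -> int:
    """Largest f that n agents can tolerate under the same bound."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return (n - 1) // 3


def quorum_size(f: int) -> int:
    """Matching votes required when n = 3f + 1. Any two quorums of this size
    share at least f + 1 agents, so at least one of the shared agents is correct.
    """
    if f < 0:
        raise ValueError("f must be non-negative")
    return 2 * f + 1


# Tolerating one faulty agent takes four agents and three matching votes.
n_for_one = min_agents(1)
votes_for_one = quorum_size(1)
```

Note that going from four agents to six buys nothing: `max_faulty(6)` is still 1. The next step up in tolerance arrives only at seven agents, and all of it presumes the faulty agents really are limited to f.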

Runtime Verification: Continuous Assurance, Not One-Time Testing

Perhaps the most practically applicable paradigm is runtime verification – monitoring actual agent behaviour against formal specifications during execution, rather than attempting to verify all possible behaviours before deployment. Unlike model checking, which is exhaustive but computationally expensive, runtime verification is lightweight, continuous, and designed for systems that evolve.

AgentGuard, published in 2025, implements exactly this for modern AI agents: a non-intrusive inspection layer that abstracts agent behaviour into formal events, builds an adaptive model of emergent behaviour, and provides dynamic probabilistic assurance – shifting from binary pass/fail to probability-of-failure quantification. This is arguably the right paradigm for LLM agents whose behaviour may shift with model updates, prompt changes, or new interaction patterns. It also sidesteps the state-space explosion problem that makes exhaustive model checking impractical for LLM-based systems, focusing instead on observed behaviour in real operating conditions.
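The general shape of a runtime monitor can be sketched as follows. This is not AgentGuard's implementation. The event names, the single escalation property, and the Laplace-smoothed estimate are assumptions chosen to show the move from a pass/fail verdict to a running probability-of-failure figure.

```python
class EscalationMonitor:
    """Checks one property over a stream of abstract events:
    after a `dosage_query`, `escalate` must occur before `respond`.
    """

    def __init__(self):
        self.pending = False   # a dosage query is awaiting escalation
        self.checked = 0       # dosage queries resolved so far
        self.violations = 0    # of those, how many were answered unescalated

    def observe(self, event: str) -> bool:
        """Consume one event; return False if it violates the property."""
        if event == "dosage_query":
            self.pending = True
        elif event == "escalate" and self.pending:
            self.pending = False
            self.checked += 1
        elif event == "respond" and self.pending:
            self.pending = False
            self.checked += 1
            self.violations += 1
            return False
        return True

    def failure_estimate(self) -> float:
        """Laplace-smoothed violation rate, so it is never exactly 0 or 1."""
        return (self.violations + 1) / (self.checked + 2)


monitor = EscalationMonitor()
trace = ["dosage_query", "escalate", "respond",
         "greeting", "respond",
         "dosage_query", "respond"]
verdicts = [monitor.observe(event) for event in trace]
```

Only the final `respond` is flagged, because it answers a dosage query that was never escalated. After two resolved queries and one violation, `failure_estimate()` returns 0.5, a figure that tightens as more traffic is observed. The monitor never inspects the model's internals; it sees only the events the system emits.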

From Theory to Practice: The Case for Agent Experience Verification

The concept of Agent Experience – AX – has emerged as a natural extension of the User Experience (UX) and Customer Experience (CX) disciplines into the agentic era. Just as organisations learned to design, measure, and optimise human interactions with their products, they now need to do the same for agent interactions. But AX without verification is branding without substance.

Consider the scale of the problem. At WPP, we have close to 30,000 AI agents deployed across media planning, content generation, and analytics. These agents interact with each other, with external platforms, with client data, and with human teams. Each one is operating in a multi-agent environment where its behaviour is shaped not just by its own specifications but by the outputs of every other agent it encounters. Verifying any single agent in isolation tells you almost nothing about how the system will behave.

What organisations actually need is continuous agent verification: the ability to test, certify, and monitor AI agents not just at deployment, but throughout their operational lifecycle. This means initial certification against formal behavioural specifications, continuous drift monitoring using graded metrics that capture graduated compliance rather than binary pass/fail, triggered re-verification when drift scores cross configurable thresholds, compositional reasoning that verifies system-level properties rather than just individual agent behaviour, and adversarial resilience testing informed by principles from Byzantine fault tolerance.
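The drift-monitoring and triggered re-verification steps can be sketched as a rolling comparison against the certified baseline. The `DriftMonitor` class, the window of three, the 0.1 threshold, and the scores are hypothetical. A production system would track many dimensions and far more data.

```python
from collections import deque


class DriftMonitor:
    """Tracks a rolling mean of graded compliance scores against a baseline."""

    def __init__(self, baseline: float, threshold: float, window: int):
        self.baseline = baseline           # score achieved at initial certification
        self.threshold = threshold         # configurable drift tolerance
        self.scores = deque(maxlen=window)

    def drift(self) -> float:
        """How far the rolling mean has fallen below the baseline (never negative)."""
        if not self.scores:
            return 0.0
        mean = sum(self.scores) / len(self.scores)
        return max(0.0, self.baseline - mean)

    def record(self, score: float) -> bool:
        """Add a score in [0, 1]; return True if re-verification should be triggered."""
        self.scores.append(score)
        return self.drift() > self.threshold


monitor = DriftMonitor(baseline=0.9, threshold=0.1, window=3)
triggers = [monitor.record(score) for score in [0.9, 0.88, 0.85, 0.7, 0.6]]
```

The first four scores leave the rolling mean within tolerance, so no action is taken. The fifth pulls it to roughly 0.72, about 0.18 below baseline, and `record` returns `True`. Because the scores are graded rather than pass/fail, the decline is visible several steps before any single interaction would have counted as an outright failure.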

These are the principles we’ve tried to put into practice at Conscium with VerifyAX. As a case study in applying decades-old verification thinking to modern agents, the platform uses multi-agent simulation environments where non-player characters – borrowing from gaming’s approach to adversarial testing – probe agents across dimensions including accuracy, safety, alignment, fairness, and reliability. The underlying design philosophy draws directly from the traditions described above: treat compliance as a spectrum (from fuzzy logic), verify continuously rather than once (from runtime verification), and assume agents will drift (from everything we know about complex adaptive systems).

I should be transparent that I founded Conscium, and VerifyAX is one practical attempt at this translation work. But the broader point is not about any single tool or platform. It’s that the theoretical and conceptual foundations for rigorous agent verification already exist. They’ve been built, tested, published, and refined over three decades. The hard engineering work of adapting them to stochastic, LLM-based systems is ongoing – and it is genuinely hard. But we have a far richer starting point than most of the industry seems to realise.

The Stakes Are Higher Than Moltbook

Moltbook was, in a sense, a fortunate rehearsal. It was a low-stakes environment that surfaced high-stakes problems. Nobody lost money. No supply chains were disrupted. No customers received dangerous advice. The platform was vibe-coded by an entrepreneur who cheerfully admitted he hadn’t written a single line of code himself. It was, as one security researcher put it, what happens when trust, automation, and identity converge without sufficient visibility.

But enterprises are now deploying agents into contexts where the stakes are anything but experimental. Agents that negotiate contracts. Agents that manage millions in media spend. Agents that interact with customers in healthcare, financial services, and legal contexts. Agents that coordinate with other agents at machine speed, making decisions faster than any human can review. And increasingly, agents built by different organisations, running on different models, with different safety profiles – interacting in shared environments that no single party controls.

In these environments, the failure modes that Moltbook previewed – agent drift, identity manipulation, emergent adversarial behaviour, cascading prompt injection, the inability to distinguish genuinely autonomous behaviour from human-manipulated behaviour – become consequential. The EU AI Act’s requirements around transparency, risk assessment, and human oversight will apply to agentic systems, and organisations that lack formal verification infrastructure will find compliance extremely difficult to demonstrate.

The irony of this moment is that we are simultaneously living through the most rapid deployment of autonomous agents in history and underutilising the most relevant bodies of knowledge ever produced on how to make such systems safe. From Socrates’ method of structured interrogation to Aristotle’s formal logic, from Chrysippus’ propositional reasoning to the medieval protocols of adversarial disputation – and then from Carl Hewitt’s Actor Model to Michael Bratman’s theory of practical reasoning, from Leslie Lamport’s work on distributed consensus to Edmund Clarke’s model checking, from Lotfi Zadeh’s fuzzy logic to the agent architectures of Michael Wooldridge and Nick Jennings – these thinkers and many others spent careers building the conceptual and mathematical toolkit for exactly the challenges we now face. Their work isn’t historical curiosity. It’s a foundation we should be actively building on.

The Socratic insight – that truth is not established by assertion but by rigorous, structured, adversarial questioning – is as relevant to the verification of AI agents as it was to the philosophical schools of Athens. The Aristotelian project of formalising the rules of valid inference is the direct ancestor of the formal methods we need to ensure that agentic systems behave as specified. The philosophical tradition’s long engagement with the problems of knowledge, trust, deception, and reasoning under uncertainty provides a conceptual vocabulary that the agentic AI community urgently needs and largely lacks.

The organisations that will navigate the next three years successfully are those that recognise agentic AI is not a greenfield problem. It’s a problem with a rich, mature, and largely untapped body of foundational work – spanning not just three decades of computer science but three millennia of structured inquiry into how reasoning agents can and should operate. The philosophical tradition spent centuries refining the conceptual tools. The computer science community spent decades translating them into formal and computational frameworks. Adapting all of this to today’s stochastic, LLM-based agents is real work – not a trivial exercise, but not a moonshot either. The question is whether the industry is willing to invest in that translation, and whether the communities that built these foundations – from philosophy departments to distributed systems labs to multi-agent research groups – and the communities building today’s agents can find each other.